My doubts about the SUPER-science, GISc, came just after I had started reading about Artificial Neural Networks and, while googling, came across the article "GIS & Artificial Neural Networks: Does Your GIS Think?". Of course, the GIS in that article stands just for the systems: it asks whether your system is thinking, and all in all the article explains just the basics of neural networks without really touching GIS as a science. Well, it was just the name that caught me: it is not us that think in GIS, it is GIS itself!? In that case, of course, my GIS was not thinking.. I was surprised I had never heard about neural networks in a GIS context. One can say it is my fault, but well, I am a geographer, and such a topic wasn't popular in the titles of geographical journals. And I have just browsed the online library of Wiley.com.. where:

There are over 90 thousand results for "neural networks", but just 3 of those are in "The Geographical Journal", and all of them are meeting, review or ceremony items.. Well, no articles.

It seems geographers are not the ones to occupy themselves with such an issue: neural networks! Pff! Or maybe the topic is considered unsuitable for a geographical journal?

Of course there are geographers, the so-called pioneers, who try to bring novelties to geography. Somehow not all of those novelties, like neural networks, get accepted.

It seems recent, but it was already more than a decade ago that the geographer Stan Openshaw wrote the book "Artificial Intelligence in Geography", filled with such enthusiasm! The book was sort of a result of the course he was giving back then at the University of Leeds. It was published by the same Wiley whose journal section I was browsing for neural networks. And already back then, being so enthusiastic about neural network applications in geography, S. Openshaw repeatedly asks in his book, with surprise, why geographers still aren't using such a powerful tool! Especially for geographical problems, which are non-linear as a rule and thus can't be approximated by mathematical models, nor made to fit the assumption of independence between variables. S. Openshaw frankly believes, and tries to show, that neural networks are the option we are looking for.

Really, why don't geographers use neural networks? Already back then, in 1997, S. Openshaw was happily expecting the upcoming "golden age" of "Thinking GIS" and "Thinking Geography", and it never came.. It is surely not because of difficulty, as it is the easiest technique to use: one doesn't really need to understand much about how the technique thinks, one just needs to choose the data for the thing one is aiming at.

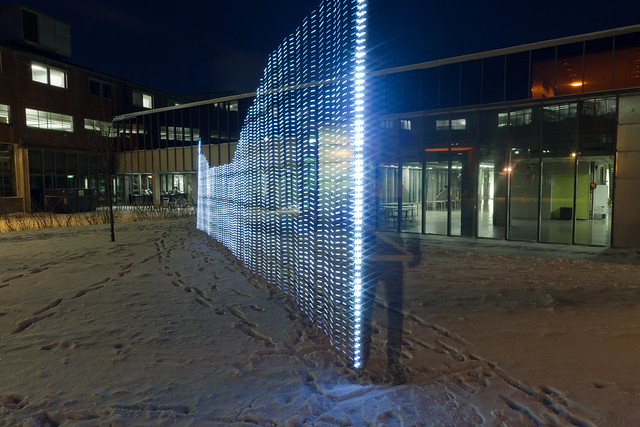

Magic hand black box! (found on eBay)

Maybe it is all because of the mystery within the technique, the so-called black box. One cannot control much of the process by which the output is created. Neural networks do it themselves. They are simply trained by being fed loads of data together with the expected result. The relations, that is, how to get from the input data to the expected result, are constructed or learned by the network itself, which keeps adjusting its guesses to minimize the error. But well, one does not even need to understand this to use it.

What geographers really need, in my opinion and to put it simply, is a great interface and great mapping possibilities, thus great graphics, or else neural networks will stay as scary as GRASS. And although I'm laughing here, well, I have not met many geographers, especially social geographers, who use GRASS software. Sure, easy-to-use software might not be enough (there is some already); one would need to make it accessible (like not too expensive), or commercialized to such an extent that even small businesses would go crazy to have it, and so it would even be taught at university. But of course, you never know.. Maybe that "golden ANN age" will come to geography as well, as the technique is already so popular in hydrology, meteorology and geotechnical problems.
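For the curious, the "feed it data plus the expected result and let it shrink the error" idea from the black-box paragraph can be sketched in a few lines of Python. This is only a toy under my own assumptions: a single artificial neuron (the smallest building block of a network, not a full one), made-up data (the logical OR of two inputs, nothing geographical), and hypothetical names such as `train`, `predict` and `SAMPLES`.

```python
import math


def predict(weights, bias, inputs):
    """The neuron's guess: a weighted sum squashed into the range 0..1."""
    total = bias + sum(w * x for w, x in zip(weights, inputs))
    return 1.0 / (1.0 + math.exp(-total))


def mean_squared_error(weights, bias, samples):
    """Average squared gap between the guesses and the expected results."""
    return sum((predict(weights, bias, x) - t) ** 2 for x, t in samples) / len(samples)


def train(samples, epochs=2000, rate=0.5):
    """Show the neuron every sample many times, nudging it after each guess."""
    weights = [0.0] * len(samples[0][0])
    bias = 0.0
    for _ in range(epochs):
        for inputs, target in samples:
            # How far off was the guess? (This is the log-loss gradient
            # for a sigmoid neuron, up to the input factor.)
            error = predict(weights, bias, inputs) - target
            # Move each weight a little in the direction that reduces the error.
            weights = [w - rate * error * x for w, x in zip(weights, inputs)]
            bias -= rate * error
    return weights, bias


# Made-up training data: (inputs, expected result), here the logical OR.
SAMPLES = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 1)]

error_before = mean_squared_error([0.0, 0.0], 0.0, SAMPLES)
weights, bias = train(SAMPLES)
error_after = mean_squared_error(weights, bias, SAMPLES)
guesses = [round(predict(weights, bias, x)) for x, _ in SAMPLES]

print("error before training:", error_before)
print("error after training:", error_after)
print("rounded guesses:", guesses)
```

Nobody tells the neuron what the rule is; it only sees examples and its own mistakes. A real network stacks many such neurons in layers, which is what lets it handle non-linear problems, and also what makes it feel like a black box.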